Video Revisited

With the keyboard all hooked up, I now had a way to enter some simple Basic programs and give FPGABee a bit of a workout to see what worked and what didn't.

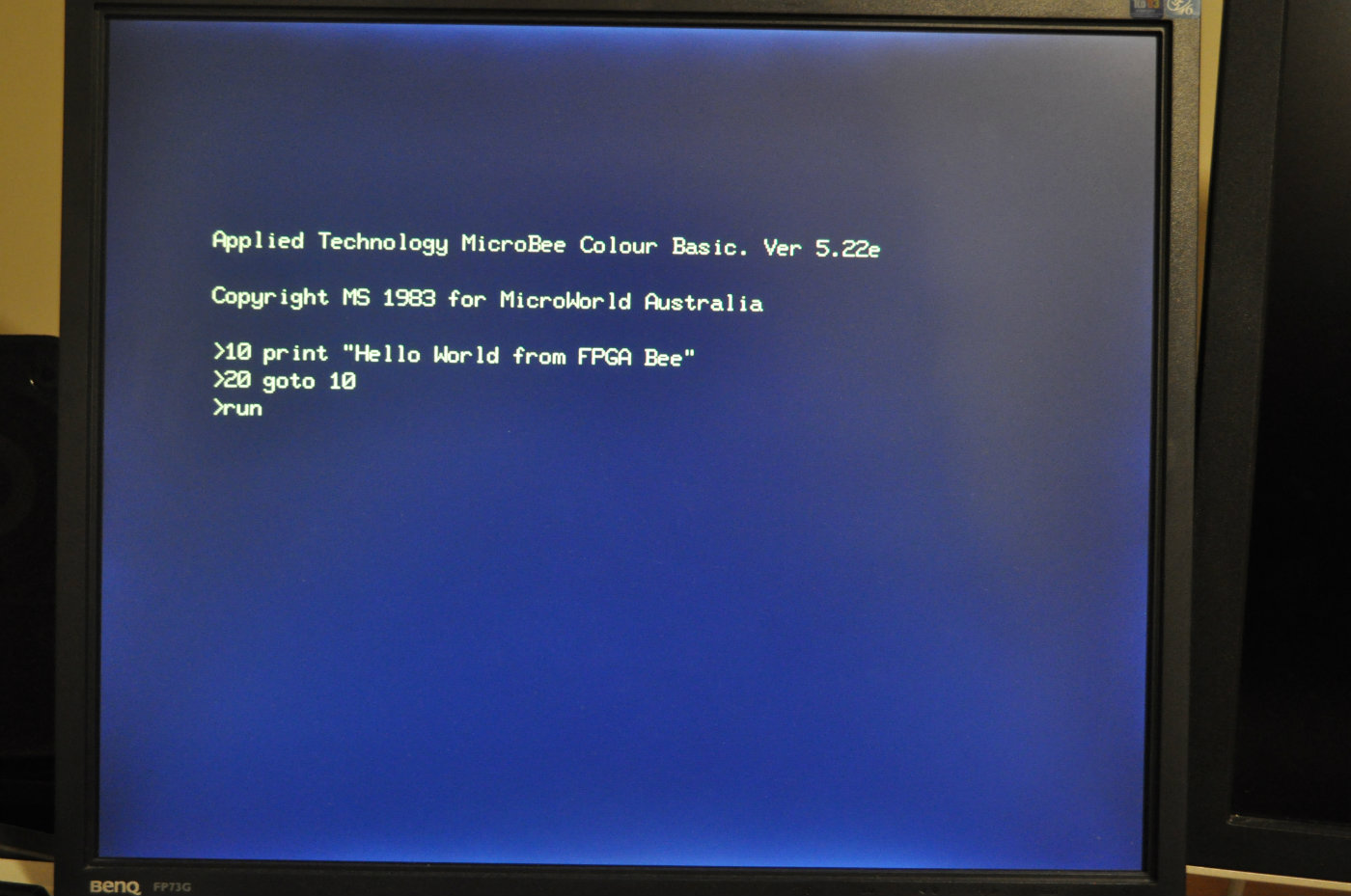

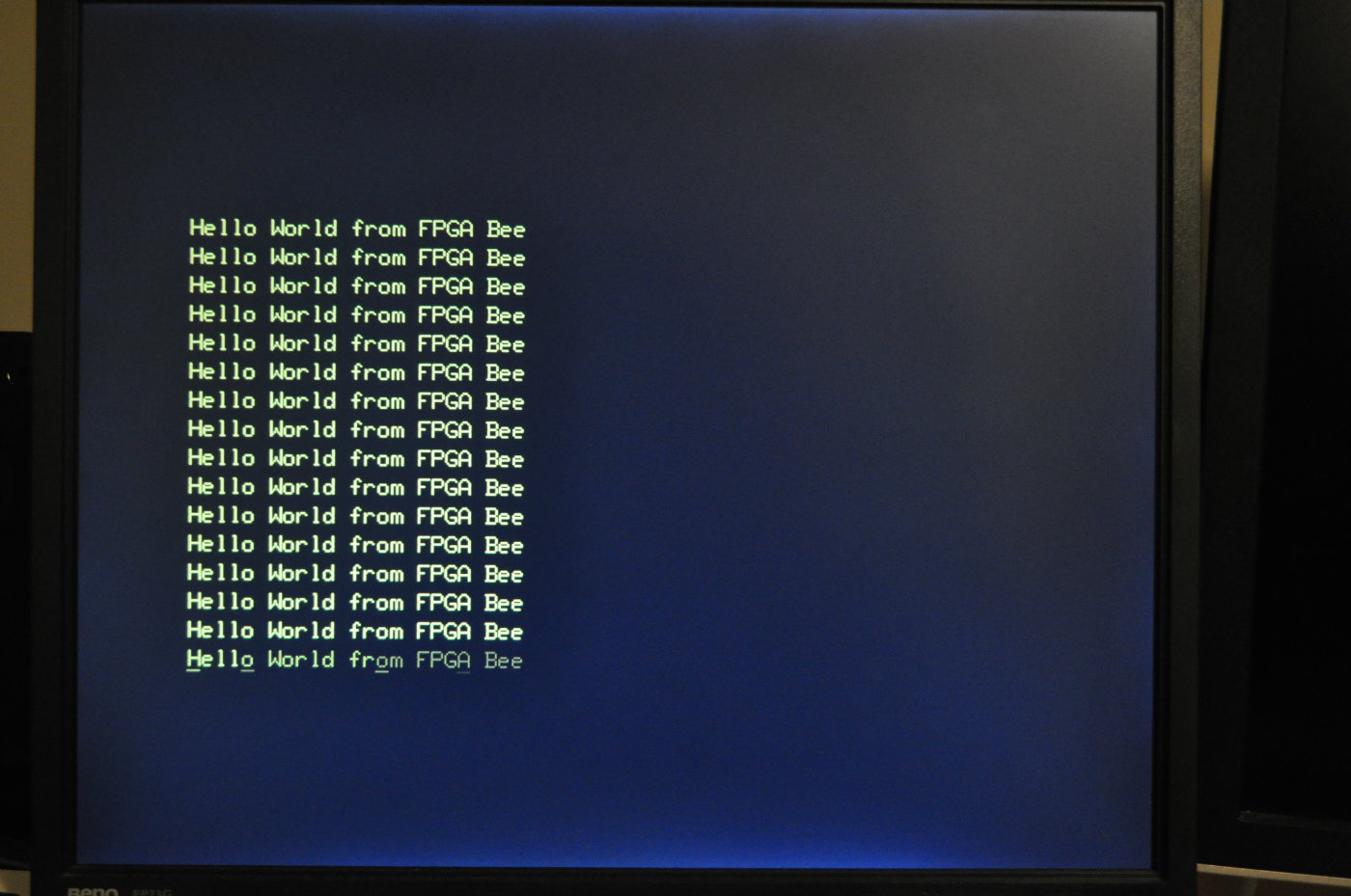

Hello World

First up, the obligatory Hello World program:

which worked just fine:

So far, so good.

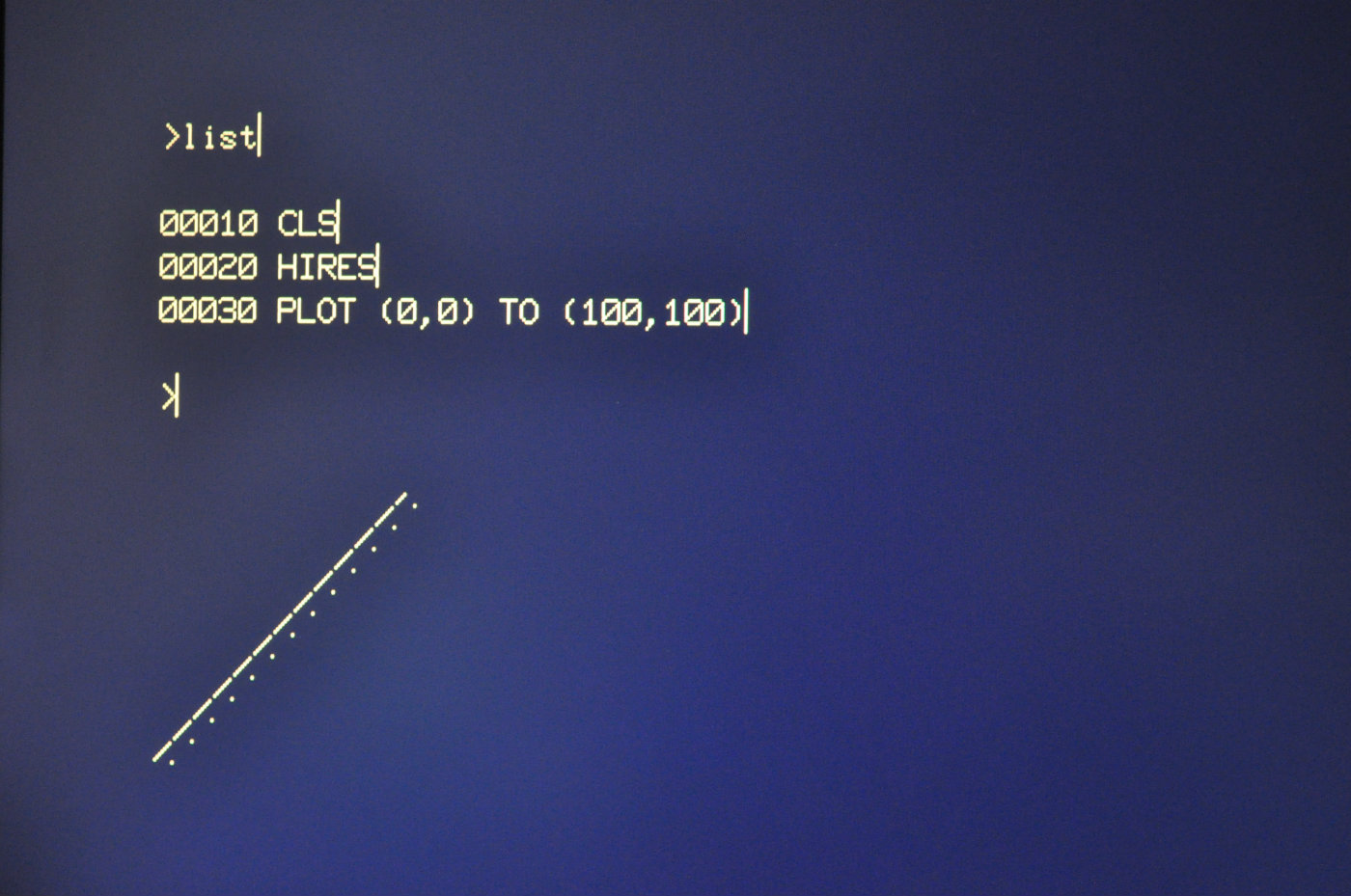

Hi-res Graphics

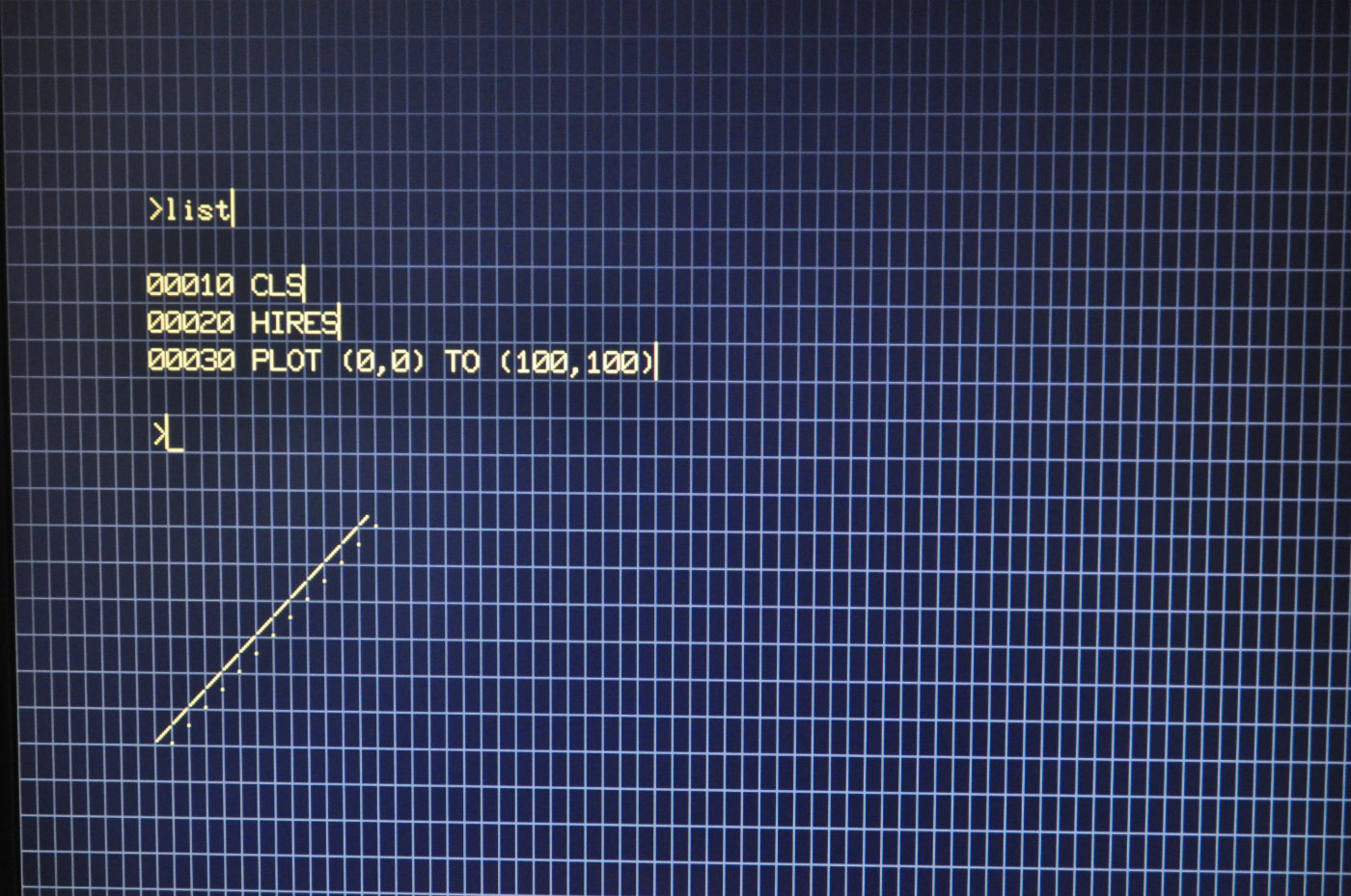

In the article about the video controller I mentioned that there were timing issues and that I'd hacked it to get something working - which so far had worked fine. Once I tried using the PCG graphics, however, things fell apart:

You'll notice two problems here:

- A weird vertical bar at the end of each line.

- The plotted line doesn't really qualify as a line.

I couldn't really make much sense of what I was seeing. It looked like some of the characters had a pixel from the left side of the character cell wrapped to the right. Even after a few rounds of running different Basic programs and different builds of the video controller I wasn't really understanding the problem.

The main problem in debugging this was that I couldn't tell which pixels were being wrapped to which character cells, so to help get a handle on it I updated the video controller to be able to show grid lines on the character cell boundaries.

Pressing F12 in FPGABee Toggles Character Cell Gridlines
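As a rough illustration of how such an overlay can be generated, here is a minimal sketch. It is not FPGABee's actual code: the entity name, the port names, the 10-bit coordinate width and the 8x16 pixel cell size are all assumptions made for the example.

```vhdl
library ieee;
use ieee.std_logic_1164.all;

-- Hypothetical sketch only: names, widths and cell size are assumptions.
entity cell_grid is
    port (
        pixel_x   : in  std_logic_vector(9 downto 0); -- x within the display area
        pixel_y   : in  std_logic_vector(9 downto 0); -- y within the display area
        show_grid : in  std_logic;                    -- toggled by a key such as F12
        on_grid   : out std_logic                     -- '1' on a cell boundary pixel
    );
end entity;

architecture rtl of cell_grid is
begin
    -- Assuming cells 8 pixels wide and 16 lines tall: the first column of a
    -- cell has the low three x bits clear, and the first row of a cell has
    -- the low four y bits clear.
    on_grid <= '1' when show_grid = '1' and
                        (pixel_x(2 downto 0) = "000" or
                         pixel_y(3 downto 0) = "0000")
               else '0';
end architecture;
```

The colour logic would then force a fixed grid colour whenever on_grid is '1', which makes it easy to see which cell a stray pixel really belongs to.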

Some more experiments and finally it clicked. It all came down to timing of the clock cycles in the video controller. In order to work out what to display in any given pixel, two memory look-ups are required:

- Get the character code of the character to be displayed

- Look up the Character ROM or PCG RAM to work out the pixel for the character

Each look-up takes a clock cycle. In other words, for any given pixel the video controller is actually displaying the pixel from 2 clock cycles ago and everything is shifted to the right by two pixels. I thought I'd compensated for this already by delaying various values through registers, but I'd neglected one - the bit which selects whether to get the pixel from Character ROM or PCG RAM, i.e. the high-order bit of the character code.

The behaviour shown above came down to two issues:

- On any boundary between a PCG and character ROM character, one column of pixels was getting selected from the wrong place. This explains the weird vertical bars. On entering hires mode, the screen is filled with character 128 - a PCG character. As text is printed to the screen a boundary between PCG and non-PCG characters is created at the end of each line.

- Due to another subtle bug in timing, the X coordinates within a character cell were being shifted by 1. This couldn't be seen with the regular character set because they all have blank pixels down the left/right side of the character cell, but it explains the distorted line plot.

In the end, I re-wrote a big chunk of the video controller coordinate calculations. Instead of delaying X-coordinates through registers, I calculate two sets of X-coordinates, the leading and following:

- the lead coordinate is the one the VGA controller thinks we're on, and the one we need to start the memory requests for

- the follow coordinate is the one we're actually sending to the monitor right now - the one we started the memory request for two clock cycles ago.

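    -- lead: the coordinate the memory requests are started for
    pixel_x_lead   <= std_logic_vector(unsigned(vga_pixel_x) - 64);
    -- follow: the coordinate being sent to the monitor, two clocks behind
    pixel_x_follow <= std_logic_vector(unsigned(vga_pixel_x) - 64 - 2);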

All the memory address calculations are derived from the lead coordinate, and the circuitry that determines the colour of the current pixel uses the follow coordinate. The only other bit that we need is the one to select whether the pixel comes from character ROM or PCG RAM. Because that can't be calculated by an offset, it's delayed through a register. It only needs to be delayed once - from the time the video RAM request comes back to the time the pixel is rendered.

    process (pixel_clock)
    begin
        if (rising_edge(pixel_clock)) then
            pcg_select <= vram_din(7);
        end if;
    end process;
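To show how the delayed select bit and the follow coordinate fit together at the output stage, here is a minimal sketch. It is an illustration under assumptions rather than the real FPGABee circuitry: the entity, the char_rom_dout and pcg_ram_dout names, the 10-bit coordinate width and the bit ordering (leftmost pixel in bit 7) are all hypothetical.

```vhdl
library ieee;
use ieee.std_logic_1164.all;
use ieee.numeric_std.all;

-- Hypothetical sketch only: port names, widths and bit order are assumptions.
entity pixel_select is
    port (
        pixel_x_follow : in  std_logic_vector(9 downto 0); -- coordinate being sent to the monitor
        pcg_select     : in  std_logic;                    -- registered bit 7 of the character code
        char_rom_dout  : in  std_logic_vector(7 downto 0); -- glyph row read from character ROM
        pcg_ram_dout   : in  std_logic_vector(7 downto 0); -- glyph row read from PCG RAM
        pixel_on       : out std_logic                     -- '1' when the current pixel is lit
    );
end entity;

architecture rtl of pixel_select is
    signal glyph_row : std_logic_vector(7 downto 0);
    signal bit_index : integer range 0 to 7;
begin
    -- Pick the glyph row from the memory that the delayed select bit names.
    glyph_row <= pcg_ram_dout when pcg_select = '1' else char_rom_dout;

    -- The low three bits of the follow coordinate give the column within
    -- the cell; the leftmost pixel is assumed to live in bit 7.
    bit_index <= 7 - to_integer(unsigned(pixel_x_follow(2 downto 0)));

    pixel_on <= glyph_row(bit_index);
end architecture;
```

The point of the sketch is that everything on the output side is indexed by the follow coordinate, so the select bit and the glyph data have to arrive in the same clock cycle for the right column to come from the right memory.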

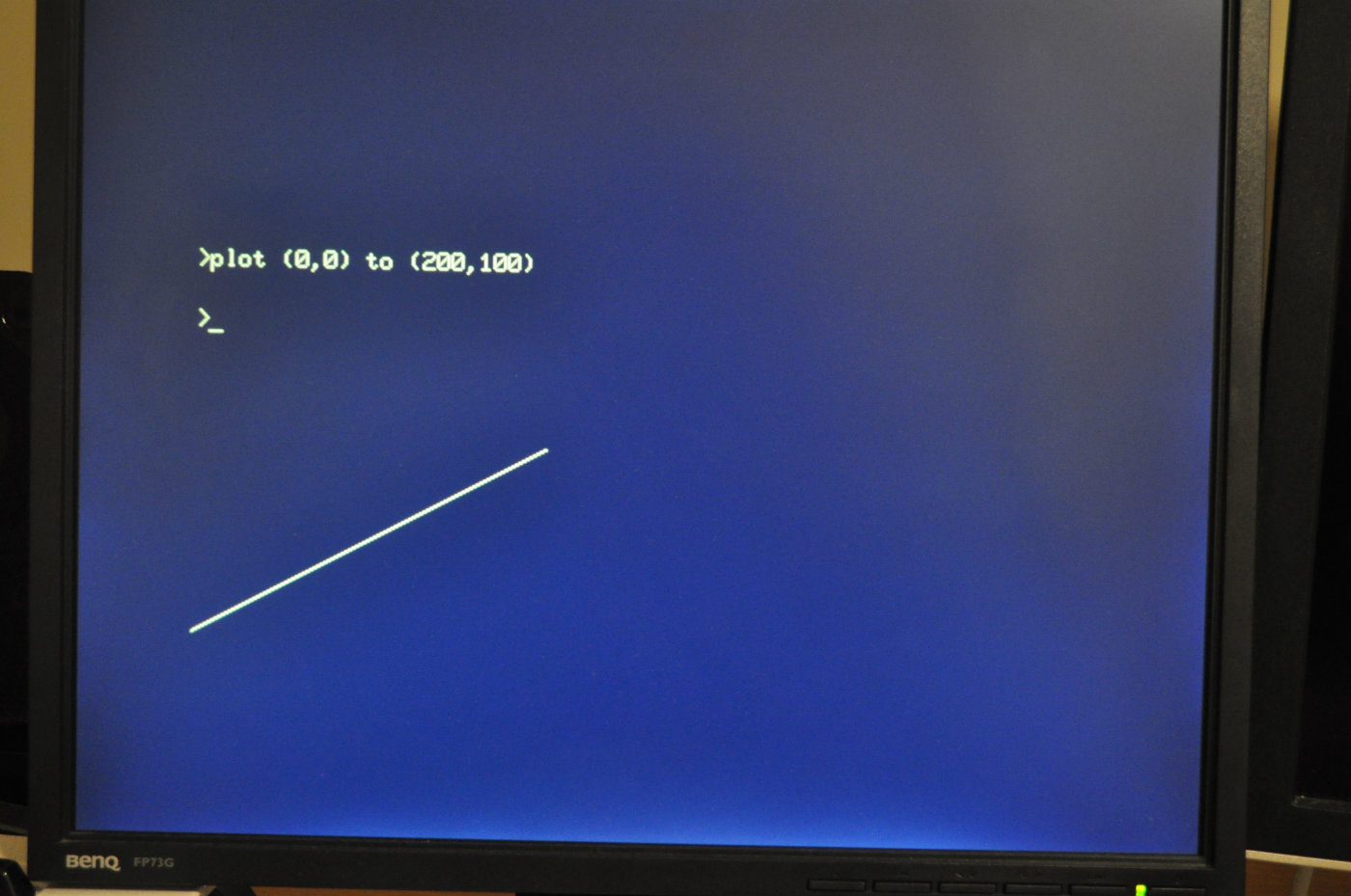

Problem solved:

The current solution is not perfect:

- All pixels are still shifted to the right by 2

- It relies on having "spare pixels" to the right of the screen area.

I'll have to revisit this again if I add support for 80 column mode since that will require the full 640 pixel width of the VGA screen. For now though it works just fine.

Keyboard Auto Repeat

After fixing the video controller I spent some more time writing simple Basic programs to exercise everything I could think of and pretty much everything worked - with the minor exception of the keyboard auto-repeat.

Auto-repeat is implemented by the Microbee's firmware (i.e. Basic), not in hardware. For timing it doesn't use instruction counts or delay loops. Instead it relies on the vertical retrace signal from the video controller to give a time base that is not dependent on the CPU clock speed.

Since FPGABee's screen refresh rate is not the same as that of a real Microbee I needed to fake a vertical retrace signal. Digging through the source code for ubee512 (which has been an invaluable resource btw) I found it implements this as a 50Hz signal with a 15% duty cycle. Sounds good to me.

    -- Generate a fake retrace signal for CPU - 50Hz, 15% duty cycle
    process (clock_100mhz)
    begin
        if (rising_edge(clock_100mhz)) then
            if (reg_retrace_counter<to_unsigned(2000000,21)) then
                reg_retrace_counter <= reg_retrace_counter + 1;
            else
                reg_retrace_counter <= (others=>'0');
            end if;
        end if;
    end process;

    in_retrace <= '1' when reg_retrace_counter < to_unsigned(300000,21) else '0';
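As a quick check of where the two constants come from, assuming clock_100mhz really does run at 100MHz as its name suggests (the symbols below are just labels for this check, not names from the code):

$$
N_{\text{period}} = \frac{100\,000\,000\ \text{Hz}}{50\ \text{Hz}} = 2\,000\,000,
\qquad
N_{\text{retrace}} = 0.15 \times 2\,000\,000 = 300\,000
$$

Both values fit in the 21-bit counter, since $2^{21} = 2\,097\,152$. Strictly speaking the counter steps through 0 to 2,000,000 inclusive, so the period is 2,000,001 clocks rather than 2,000,000, an error of about half a part per million.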

The in_retrace signal is then made available to the CPU through the 6545 status register:

    -- read status register
    dout <= '1' &
            reg_status_light_pen_ready &
            in_retrace &
            "00000";

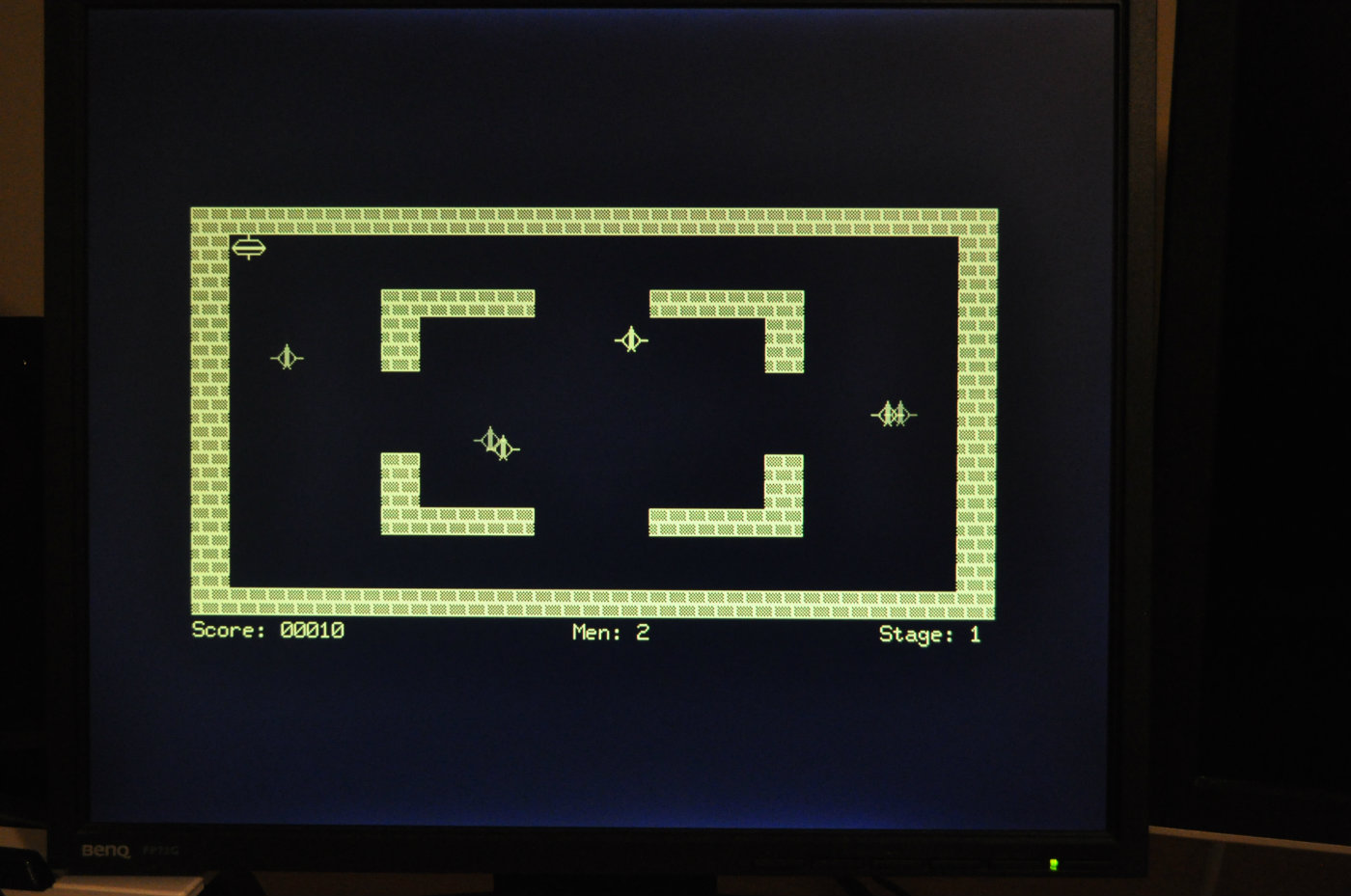

Robot Fire!

Finally, everything seemed to be working. Time to load some real software, which of course might as well be something I wrote - Robot Fire. Not wanting to wait for any sort of cassette I/O support, I decided to build a ROM version of it. I knocked together a C# program that took the binary image and prefixed it with a little assembly language stub to shift it from ROM address space into regular RAM and then jump to its starting address.

A hack to be sure, but it worked: